Steve Nguyen

Ongoing robotics projects

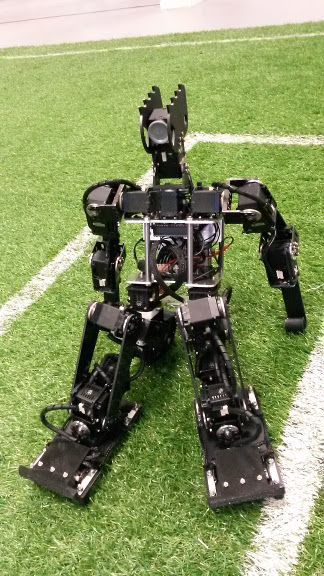

RoboCup Humanoid kid-size league

RoboCup is an international robotics competition gathering around 3500 participants from 50 countries. Its aim is to promote and advance robotics and artificial intelligence by offering a publicly appealing challenge. The long-term goal of RoboCup is to see a fully autonomous team of soccer robots beat the human world champion team by 2050.

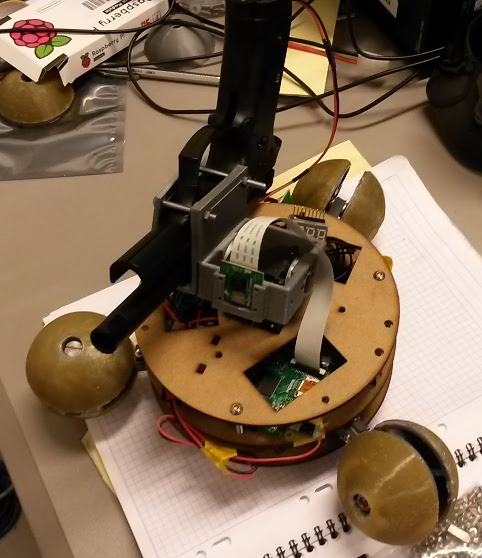

I have participated with the Rhoban team in the Kid-size Humanoid soccer league since 2015. I am involved in robot design and fabrication, the vision system and localization.

I designed and built the latest version of the SigmaBan platform, with which we won the 2017 competition along with the Best Humanoid Award. The mechanical design is open source; the Autodesk Inventor files are available here.

SigmaBan platform (2017 version)

It is important to note that these robots are far from the ideal, purely rigid mechanical model. The motors are not powerful enough to follow the trajectories perfectly, and the environment is rather "natural" and uncontrolled (the ground is made of artificial grass)… Consequently, the control and perception of this kind of robot require some ability to adapt and to deal with uncertainty. In particular, I am interested in learning the robot's model and its control law.

RoboCup Humanoid Adult-size league (work in progress)

We have started to build an adult-size humanoid robot (~1.5 m). The engineering challenges are very different from those of the kid-size robot (~65 cm), and we decided to use an approach based on harmonic drive reducers. The low-level controllers will communicate over an EtherCAT bus. We want to equip the whole robot with custom torque sensors along with safety clutches. The aim is to make the robot able to withstand a fall, which is generally not the case for this kind of robot.

CAD model of a test integration of the two legs

Prototype

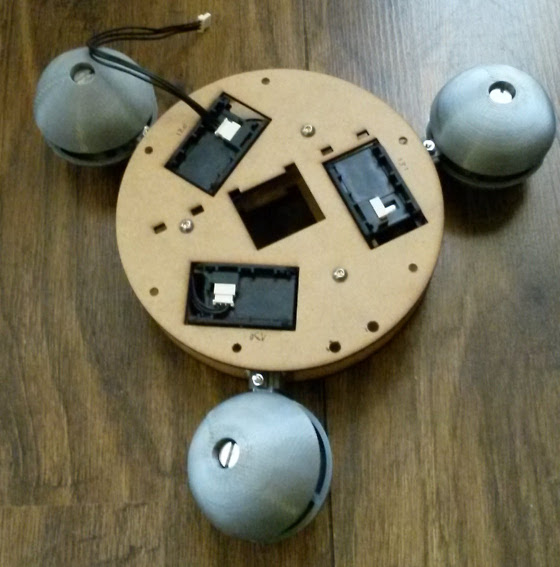

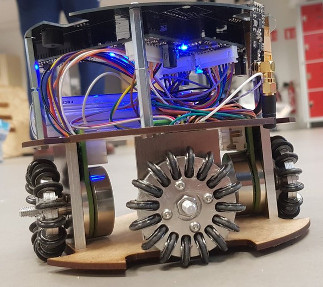

RoboCup SSL

I was part of the NAMeC team where I mainly designed the mechanics of the robot.

First prototype of our SSL robot

Agricultural robot (BipBip)

As part of the ANR Rose Project, I worked on E-Tract (an autonomous electric tractor), where I mainly developed the control and navigation system (vision- and GPS-based), and on BipBip (a smart autonomous weeding tool), where I worked on control and sensor integration.

E-Tract and the BipBip tool

Past projects

Thesis project: Psikharpax

As you probably don't want to read my PhD thesis (in French), here is a short summary:

The objective of the Psikharpax[1] project was to produce an "artificial rat" by integrating all the models of the rat's brain into a single robot. The aim was to test the validity of our knowledge about the rat's brain and to improve the autonomy of robots. Psikharpax was highly representative of the Animat[2] bottom-up approach.

The ability to sense and act in a real environment is crucial for intelligence. My work in this project was therefore halfway between robotics and neuroscience: I developed the sensory system, including touch (with a new artificial whisker sensor), audition and vision, along with the multi-sensory integration.

Tactile

Whiskers, or vibrissae, are very important for rats. Because they usually live in narrow, dark environments, rats rely heavily on this very rich tactile sensor. Using their whiskers, rats can recognize shapes, discriminate fine textures, and estimate gap length or aperture size. Whiskers are often compared to human fingertips in terms of haptic abilities. This sensory modality is also widely studied in biology, as the pathway from each individual vibrissa to the somatosensory cortex (the "barrel cortex") is known quite precisely.

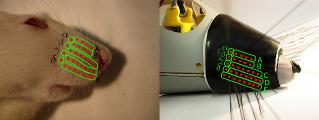

However, little work has been done in robotics to exploit such a rich sensor. I therefore developed a new tactile sensor, based on conductive elastomer, that reproduces the stereotyped organization of the rat's whisker pad.

Rat's whiskers and their artificial counterparts

I also developed a bio-inspired model for texture feature extraction. This model allowed for texture recognition with very high performance. With this system I was able to explore the effect of whisker organization (in particular the size gradient) on texture recognition. The model I proposed may help to reconcile two opposing theories in biology about the coding of textures: frequency coding (the resonance hypothesis) and temporal coding (the kinetic signature hypothesis).

See my papers on this subject: N'Guyen et al. 2009, N'Guyen et al. 2010, N'Guyen et al. 2011

Audition

The auditory system was developed in collaboration with Mathieu Bernard.

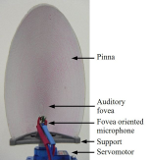

We proposed a bio-inspired model that relates audition and touch. This system comprises a classical cochlear model (Lyon's model) to extract multi-channel "spikes" from sound. Inter-aural time difference (ITD) and inter-aural level difference (ILD) were then computed for each of these channels. Using this model, and by taking advantage of the mobile pinnae of the robot, we were able to efficiently separate and locate sound sources.
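As a rough illustration of the two binaural cues mentioned above, here is a minimal Python sketch that estimates an ITD by cross-correlation and an ILD as an energy ratio. It is not the code that ran on Psikharpax: it works on one pair of raw sample lists rather than on the per-channel spikes of Lyon's cochlear model, and the function name `estimate_itd_ild`, the `max_lag` parameter and the sign convention are assumptions made for the example.

```python
import math


def estimate_itd_ild(left, right, sample_rate, max_lag):
    """Estimate (itd_seconds, ild_db) from two equally long signals.

    Assumed convention: a positive ITD means the sound reached the
    right ear first; a positive ILD means the left ear is louder.
    """
    n = len(left)
    best_lag, best_score = 0, -math.inf
    # Slide one signal against the other; the lag that maximises the
    # cross-correlation is taken as the inter-aural time difference.
    for lag in range(-max_lag, max_lag + 1):
        score = sum(
            left[i + lag] * right[i]
            for i in range(max(0, -lag), min(n, n - lag))
        )
        if score > best_score:
            best_lag, best_score = lag, score
    itd = best_lag / sample_rate

    # ILD: ratio of the two signal energies, expressed in decibels.
    eps = 1e-12  # avoids log(0) on silent input
    energy_left = sum(x * x for x in left)
    energy_right = sum(x * x for x in right)
    ild = 10.0 * math.log10((energy_left + eps) / (energy_right + eps))
    return itd, ild
```

For instance, feeding it a noise burst whose left copy is delayed by 5 samples at a 1 kHz sampling rate should give an ITD of 5 ms. In a multi-channel setting, the same function would simply be applied to each frequency channel separately.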

Close view of the artificial ear

Moreover, based on the idea that tactile and auditory information are in fact quite homogeneous (at least for "texture"), we were able to use the same texture recognition model for audition and touch. This idea may have some biological relevance, as cross-sensory interference between touch and audition has been observed in humans, suggesting a common cortical area responsible for both.

See our papers: Bernard et al. 2010b, Bernard et al. 2010a

Vision

The vision system was based on a real-time hardware processing chip developed by the company BVS (which funded my PhD). This module mimics the human visual system with several filtering layers (color, edges, motion…) along with tracking units. A low-level visual layer was developed using this chip, allowing for the detection, tracking and feature extraction of objects in the environment.

Psikharpax in front of a screen during a saccade experiment

I then developed a saccadic system able to select and learn visual targets. This system integrated a Superior Colliculus model (responsible for the visual-to-motor transformation) along with a Basal Ganglia model (responsible for selection and reinforcement learning).

See our papers: N'Guyen et al. 2010a, N'Guyen et al. 2013a (about a more complete model of saccadic circuitry, coming soon)

Multi-sensory integration

Finally, all these sensory modalities were integrated through their projections to the Superior Colliculus. This structure is known to be a primary center for multi-sensory integration. It contains not only visual information but also retino-centric maps for other modalities, including somatosensory (tactile), audition, smell… For each modality, the features of perceived objects were selected and learned by parallel Basal Ganglia loops. The resulting multi-sensory model was then able to trigger orienting behavior depending on conjunctions of rewarded multi-sensory cues.

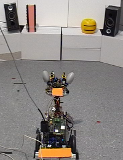

Psikharpax in a visuo-auditory orienting task

Psikharpax in a tactile-visuo-auditory discrimination task

Unfortunately, I have not yet had time to write a paper about the multi-sensory integration, but several papers were published on using the whole system for navigation: Caluwaerts et al. 2011, Caluwaerts et al. 2012b, Caluwaerts et al. 2012a

Misc

Robots I made or played with

Psikharpax

A.k.a. the artificial rat, my PhD thesis project (see above).

Psikharpax v1

At the VES exhibition in Paris

Live tactile texture recognition at the VES in front of iCub

Psikharpax v2 with lift-up and grasping capability (design by Mr.QQ)

Nao

SABIAN

Developed by the Italian Biorobotics Institute (SSSA), based on the WABIAN robot from Waseda University. I had the opportunity to run some experiments on this robot in collaboration with SSSA as part of the RoboSoM project.

SABIAN

Poppy and Jack

Poppy is a new, small, low-cost custom humanoid robot developed by Matthieu Lapeyre from the INRIA Flowers team. It is still in development, and we are implementing probabilistic control on it. I have modified the original design to add another degree of freedom, different feet and various sensors.

Jack is another small, low-cost humanoid based on Robotis Dynamixel motors. It is inspired by the Acroban robot from INRIA and is intended for experiments on compliance.

Poppy

My Poppy after some modifications

Jack

Jack again

SigmaBan and GrosBan

Videos

My YouTube channel: https://www.youtube.com/user/SteveNguyen000

Here are a few links to videos of some of my work on Psikharpax:

A demo I made on Nao with equilibrium perception:

A barely successful attempt to make Poppy walk:

A short summary of RoboCup 2016:

Homebrewing

I have also been an amateur brewer since 2006. Check out our brewery website (in French): La Pinte au Bossu.

Footnotes:

[1] The Psikharpax name (literally "crumb stealer") comes from the Greek poem Batrachomyomachia ("The Battle of Frogs and Mice"), a parody of the Iliad, where he is the king of rats.

[2] Contraction of "animal" and "artificial". Scholarpedia link